Can ChatGPT read who you are?

Introduction

Exciting question, isn’t it? Or perhaps a scary one… Knowing that ChatGPT excels at processing information and providing structured output, we looked into its ability to perform personality assessments and compared it with that of real-world psychologists. I think the results are interesting, and I’d like to share an overview of them in this post.

We put the results in a paper accepted to the Collaborative AI and Modeling of Humans (CAIHu) workshop at AAAI ’24:

E. Derner, D. Kučera, N. Oliver, and J. Zahálka: Can ChatGPT Read Who You Are? (CAIHu 2024)

So, let’s look at what ChatGPT can do when it puts the psychologist hat on, shall we? Then we can think about what bearing this may have on AI and even security going forward.

Personality estimation

As the personality estimation technique, we use the Big Five inventory, which seeks to gauge the presence of the following personality traits:

- Extraversion

- Agreeableness

- Conscientiousness

- Neuroticism (emotional lability)

- Openness to experience

Each assessed person, called the subject, first fills in a 44-question self-assessment questionnaire. Then, a person close to the subject, called the partner, fills in the same questionnaire to assess the subject. The subject then composes four short letters (180-200 words) on the following topics:

- Cover letter for a job the subject is really interested in.

- Letter from a vacation for a friend inviting them to join the subject.

- Complaint letter describing newly-arisen issues at the apartment building the subject lives in.

- Letter of apology to someone the subject had been close to for a long time.

Based on these four letters, two experts, expert A and expert B, provide their assessments of the subject’s personality traits. Finally, ChatGPT completes its assessment based on the letters, the assessment task description (“assign a score…”), and the definition of each of the five traits.

Each assessment (subject, partner, expert A, expert B, ChatGPT) results in assigning a low, medium, or high value to the subject in each of the Big Five traits. Our dataset comes from the CPACT research project, is in Czech, and features texts related to 155 subjects (77 men, 78 women) above the age of 15.
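
To make the ChatGPT step more concrete, here is a minimal sketch of how the three inputs (task description, trait definitions, and the four letters) could be assembled into a single prompt. This is only an illustration: the function name `build_prompt`, the layout of the prompt, and the placeholder strings are my assumptions for this post, not the prompts used in the paper.

```python
# Hypothetical sketch: assembling one assessment prompt from the three inputs
# described above. The layout is an assumption, not the paper's actual prompt.

TRAITS = (
    "extraversion",
    "agreeableness",
    "conscientiousness",
    "neuroticism",
    "openness to experience",
)


def build_prompt(task_description, trait_definitions, letters):
    """Return a single prompt string for one subject.

    task_description  -- free-text instruction for the model
    trait_definitions -- dict mapping each Big Five trait to its definition
    letters           -- the four letters written by the subject
    """
    missing = [t for t in TRAITS if t not in trait_definitions]
    if missing:
        raise ValueError(f"missing trait definitions: {missing}")
    if len(letters) != 4:
        raise ValueError("expected exactly four letters per subject")

    parts = [task_description, "", "Trait definitions:"]
    for trait in TRAITS:
        parts.append(f"- {trait}: {trait_definitions[trait]}")
    for i, letter in enumerate(letters, start=1):
        parts += ["", f"Letter {i}:", letter]
    return "\n".join(parts)
```

The model’s reply would then be mapped to a low, medium, or high value per trait, so that it can be compared with the other four assessments.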

ChatGPT as a psychoanalyst

Before we start assessing ChatGPT’s psychoanalytic prowess, we have to decide whose assessment it is supposed to match. The established psychology practice, which was surprising to me at first, is that the self-assessment is the most accurate. Then I saw the discrepancy between the expert assessments, and I was convinced. So essentially, with ChatGPT, we are trying to match the self-assessment values.

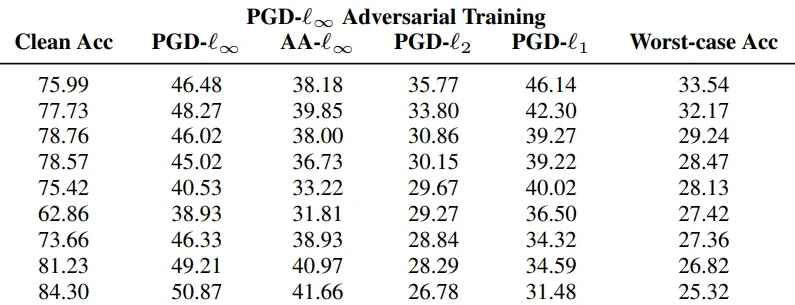

Figure 2 shows the agreement between the subject’s self-assessment and the rest, including various configurations of ChatGPT that differ in the way ChatGPT was prompted. We measure the agreement with an F1 score. Based on the results (and the paper contains a lot of them, including statistical tests), ChatGPT is indeed a solid “psychoanalyst”. On four out of the five personality traits, it scored either the highest or the second-highest behind the partner assessment, i.e., the assessment from the person who knows the subject intimately.
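
For readers who want to see what such an agreement score looks like in practice, below is a small, self-contained sketch that computes an F1 score for one trait, treating the self-assessment as the reference. I use the macro average over the three levels here, which is an assumption made for illustration; the paper specifies the exact variant and evaluation protocol. The function names are hypothetical.

```python
# Sketch: macro-averaged F1 between a reference assessment (the subject's
# self-assessment) and another assessor, for a single trait.
# Macro averaging is an assumption made for this illustration.

LEVELS = ("low", "medium", "high")


def f1_for_level(reference, predicted, level):
    """F1 score for one level, treating it as the positive class."""
    pairs = list(zip(reference, predicted))
    tp = sum(r == level and p == level for r, p in pairs)
    fp = sum(r != level and p == level for r, p in pairs)
    fn = sum(r == level and p != level for r, p in pairs)
    if tp == 0:
        return 0.0  # no correct hits for this level
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(reference, predicted):
    """Unweighted mean of the per-level F1 scores."""
    if len(reference) != len(predicted):
        raise ValueError("both assessments must cover the same subjects")
    return sum(f1_for_level(reference, predicted, lvl) for lvl in LEVELS) / len(LEVELS)


# Toy data for six made-up subjects (not taken from the dataset).
self_assessment = ["high", "low", "medium", "high", "low", "medium"]
other_assessor = ["high", "medium", "medium", "high", "low", "high"]
print(round(macro_f1(self_assessment, other_assessor), 3))
```

Running the same function once per trait and per assessor (partner, expert A, expert B, each ChatGPT configuration) gives the kind of comparison the figure summarizes.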

The answer to the main question is clear, but it’s good to bear in mind the following observations:

- ChatGPT has a positive bias. It skews the results towards socially desirable traits, which is in line with the LLMs’ tendency to provide neutral or positive outputs. This explains ChatGPT’s poor performance on neuroticism.

- Not all personality traits are created equal. Fig. 2 reveals considerable variance in the absolute agreement values across traits: it is easier to find agreement on agreeableness (no pun intended) and extraversion, but neuroticism, openness, and conscientiousness are tougher. Natural language analysis is simply not a one-size-fits-all method to assess personality traits.

- ChatGPT’s performance is heavily prompt-dependent. The configurations reported in Fig. 2 vary only in the way we craft the prompt; the LLM itself remains the same. As we can see, there is no clear winner among the configs.

- Discrepancy between human experts. I was surprised to see that the human experts’ assessments of the same subjects do not consistently match.

All in all, the results are promising, but we should be mindful of the limitations.

Utilizing the ChatGPT psychoanalyst

Personally, I fully expect the chatbot to dominate user-AI interaction in the foreseeable future. Of course, I am not saying that all AI will be LLMs; I simply believe most of the interaction between users and AI-powered systems will be through an LLM-equipped chatbot. Improving the chatbot’s understanding of the user has great potential to enhance the quality, relevance, and pleasantness of the interaction.

Even though this is not really a security post, let’s briefly discuss the potential impact of “psychoanalytic” LLMs on security. As is always the case in security, new technology can be a boon for both attackers and defenders, and these enhanced LLMs are no exception. Attackers could, for example, leverage personality knowledge for better (spear)phishing. On the other hand, being able to assess the user’s personality can serve as “soft authentication”. An impostor trying to interact in another user’s stead must now match their personality profile, lest the LLM detect that the interactions do not come from the person they should come from. In general, additional nuance in prompts and in the user-model exchange will certainly inspire additional nuance and variance in prompt-based attacks, which are summarized in our LLM security taxonomy.
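
To illustrate the “soft authentication” idea mentioned above, here is a purely hypothetical sketch: it compares a stored personality profile with one estimated from a new interaction and flags the session if too few traits match. The function names and the 0.8 threshold are my assumptions for illustration only; nothing like this is evaluated in the paper.

```python
# Hypothetical sketch of "soft authentication" via personality profiles.
# Each profile maps a Big Five trait to "low", "medium", or "high".

TRAITS = (
    "extraversion",
    "agreeableness",
    "conscientiousness",
    "neuroticism",
    "openness",
)


def profile_match(stored, observed):
    """Fraction of traits on which the two profiles agree."""
    return sum(stored[t] == observed[t] for t in TRAITS) / len(TRAITS)


def looks_like_same_user(stored, observed, threshold=0.8):
    """True if enough traits match; the threshold is an arbitrary assumption."""
    return profile_match(stored, observed) >= threshold
```

Given the disagreement between assessors discussed earlier, such a check could only ever be one weak signal among many, never a replacement for real authentication.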

I think this is a very exciting research direction that may have a tremendous positive impact on human-AI interaction (if done right). I am excited to continue and further expand our collaboration with ELLIS Alicante (E. Derner & N. Oliver) and the University of South Bohemia (D. Kučera). I invite you to read the paper as well, and I will keep you posted about further developments!
